On-Premise Gitlab with Autoscaling Docker Machine Runners on Microsoft Azure


This post describes what we learned last week while expanding our on-premise Gitlab installation with autoscaling Docker Machine runners hosted in the Microsoft Azure cloud.

Preface

We have been running a fairly large Gitlab installation for our developers for about five years. Since March of this year, we have also been using it for CI/CD/CD (continuous integration, continuous delivery and continuous deployment) with Docker. Since our developers came to love the flexibility and power of Gitlab combined with Docker, the number of build jobs has been rising steadily. After approximately six months, we have run nearly 7,000 pipelines and nearly 15,000 jobs.

Some pipelines run for quite a long time, for example Maven builds or builds of multiple Docker images (microservices). As a result, we were running out of local on-premise Gitlab runners. To be fair, this would not have been a serious problem, because we have a very large VMware environment on site, but we wanted to test the Gitlab autoscaling feature in a real-world production environment.

Our company is a Microsoft enterprise customer, so we were able to test this in an environment that differs somewhat from the usual setup.

Cloud differences

As mentioned above, our integration between the on-premise network and the cloud is more elaborate than usual. We have a site-to-site connection to Microsoft, which lets us use the Microsoft Azure cloud as if it were an off-site office reachable over the WAN (wide area network).

Gitlab autoscale runner configuration

Initially, we simply followed the instructions in the Gitlab documentation. They are sufficient, especially in combination with the corresponding docker+machine documentation for the Azure driver.

Important: Persist the docker+machine data! Otherwise, every time the Docker Gitlab runner container restarts, the information about the virtual machines created in the cloud will be lost!

Gitlab runner configuration

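As a rough sketch of what the docker+machine part of the runner's config.toml can look like, the Python snippet below renders a [runners.machine] section for the Azure driver. The key names (IdleCount, IdleTime, MachineDriver, MachineName, MachineOptions) follow the Gitlab runner configuration format and the option names come from the docker-machine Azure driver; every value (subscription ID, resource group, location, VNet, subnet) is a hypothetical placeholder, not our actual configuration.

```python
import json
from typing import Dict

# Hypothetical placeholder values: replace them with your own subscription,
# resource group, location and network names.
AZURE_OPTIONS: Dict[str, str] = {
    "azure-subscription-id": "00000000-0000-0000-0000-000000000000",
    "azure-resource-group": "gitlab-runners",
    "azure-location": "westeurope",
    "azure-vnet": "onprem-vnet",
    "azure-subnet": "runner-subnet",
    # Assumption: reach the VMs via their private IPs over the site-to-site link.
    "azure-use-private-ip": "true",
}


def toml_string(value: str) -> str:
    """Quote a plain ASCII value as a TOML basic string (JSON escaping is compatible here)."""
    return json.dumps(value)


def machine_section(options: Dict[str, str], idle_count: int = 0, idle_time: int = 1800) -> str:
    """Render the [runners.machine] part of config.toml for the docker+machine Azure driver."""
    lines = [
        "  [runners.machine]",
        f"    IdleCount = {idle_count}",
        f"    IdleTime = {idle_time}",
        f"    MachineDriver = {toml_string('azure')}",
        # Gitlab runner replaces %s with a unique suffix for each machine.
        f"    MachineName = {toml_string('gitlab-runner-%s')}",
        "    MachineOptions = [",
    ]
    # Each entry is handed to docker-machine as --<key>=<value>.
    lines += [f"      {toml_string(f'{key}={value}')}," for key, value in options.items()]
    lines.append("    ]")
    return "\n".join(lines)


if __name__ == "__main__":
    print(machine_section(AZURE_OPTIONS))
```

The generated section belongs below the [[runners]] entry that uses the docker+machine executor. Using private IPs is an assumption that matches the site-to-site setup described above; with a different network layout you may need other driver options.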

Forward, we have to go back!

This is where the trouble started.

Gitlab autoscale runner Microsoft Azure authentication

We decided to run the Gitlab autoscaling runner from the official Gitlab runner Docker image, as we do with all our other runners. After the basic Gitlab runner configuration (as described in the documentation), the docker+machine driver tries to connect to the Microsoft Azure API and starts authenticating against the given subscription. Because the Gitlab runner runs as a Docker container, you have to look at the container logs to see the Microsoft login instructions.

Important: The Microsoft Azure cloud login information is stored inside a hidden folder in the /root directory of the Gitlab runner container! You should really persist the /root folder, or you will have to authenticate every time you start the runner!

Important: Use the MACHINE_STORAGE_PATH environment variable to control where docker-machine stores its virtual machine inventory, and persist that directory! Otherwise you will lose the inventory every time the container restarts!
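To make the lookup explicit, here is a small Python sketch of how the inventory location is resolved: docker-machine uses MACHINE_STORAGE_PATH when it is set and otherwise falls back to ~/.docker/machine. The function name and its parameters are illustrative only.

```python
import os
from typing import Mapping, Optional


def machine_storage_path(env: Optional[Mapping[str, str]] = None,
                         home: Optional[str] = None) -> str:
    """Return the directory where docker-machine keeps its machine inventory.

    docker-machine uses MACHINE_STORAGE_PATH when it is set and otherwise
    falls back to ~/.docker/machine.
    """
    env = os.environ if env is None else env
    storage = env.get("MACHINE_STORAGE_PATH")
    if storage:
        return storage
    home = os.path.expanduser("~") if home is None else home
    return os.path.join(home, ".docker", "machine")


if __name__ == "__main__":
    print(machine_storage_path())
```

In the runner container, which runs as root, the fallback resolves to /root/.docker/machine, i.e. inside the /root folder mentioned above. Pointing MACHINE_STORAGE_PATH at a dedicated volume makes the inventory location explicit instead of relying on that fallback.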

If you skip this step, you will have to log in again. You will notice the problem in the container's log messages: every five seconds, a new login attempt appears. Each attempt is valid only for a short time, and each one requires you to enter a new login code online. You can work around this deadlock by opening an interactive shell in the Docker Gitlab autoscale runner container and running the docker-machine ls command. This command waits until you have entered the displayed code online.
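Because each new attempt invalidates the previous code, only the most recent code in the log is worth entering. The Python sketch below picks it out of a sequence of log lines. It assumes the driver prints the usual Azure device-login wording ("... enter the code XXXXXXXX to authenticate"); that pattern is an assumption, so check it against your own container logs.

```python
import re
from typing import Iterable, Optional

# Assumed wording of the Azure device-login message, e.g.
# "... open the page https://microsoft.com/devicelogin and enter the code ABCD1234 to authenticate."
# The exact text may differ between driver versions.
CODE_PATTERN = re.compile(r"(?i:enter the code)\s+([A-Z0-9]{6,})\b")


def latest_login_code(log_lines: Iterable[str]) -> Optional[str]:
    """Return the most recent device-login code found in the log lines, if any."""
    latest = None
    for line in log_lines:
        match = CODE_PATTERN.search(line)
        if match:
            latest = match.group(1)  # later attempts supersede earlier, expired codes
    return latest
```

You could feed it the output of docker logs for the runner container; the docker-machine ls workaround remains the simpler fix, since it keeps a single login attempt alive until you have entered its code.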

Docker Gitlab autoscale swarm configuration

Important: This is a swarm compose file!

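As a hedged sketch of the persistence rules from the Important notes above, the following Python function takes a parsed compose service definition (short volume syntax, environment as a mapping or a KEY=VALUE list) and reports which container paths that should survive a restart are not backed by a volume. The service layout, the volume names and the /machine path in the usage are illustrative assumptions, not our actual swarm file.

```python
from typing import Any, Dict, List

DEFAULT_STORAGE = "/root/.docker/machine"  # docker-machine default when running as root


def _environment(service: Dict[str, Any]) -> Dict[str, str]:
    """Normalise the compose 'environment' key (mapping or list of KEY=VALUE)."""
    env = service.get("environment", {})
    if isinstance(env, list):
        return dict(item.split("=", 1) for item in env if "=" in item)
    return dict(env)


def missing_persistent_paths(service: Dict[str, Any]) -> List[str]:
    """List container paths that should be persisted but are not mounted as volumes."""
    storage = _environment(service).get("MACHINE_STORAGE_PATH", DEFAULT_STORAGE)
    required = [
        "/etc/gitlab-runner",  # config.toml of the Gitlab runner
        "/root",               # hidden folder with the Azure login data
        storage,               # docker-machine inventory
    ]
    # Short volume syntax "source:target[:mode]": the target is the second field.
    targets = [v.split(":")[1] for v in service.get("volumes", []) if ":" in v]

    def covered(path: str) -> bool:
        return any(path == t or path.startswith(t.rstrip("/") + "/") for t in targets)

    return [path for path in required if not covered(path)]


if __name__ == "__main__":
    runner = {
        "image": "gitlab/gitlab-runner:latest",
        "environment": {"MACHINE_STORAGE_PATH": "/machine"},
        "volumes": ["runner-config:/etc/gitlab-runner", "runner-root:/root"],
    }
    print(missing_persistent_paths(runner))
```

Keep in mind that with the default local volume driver, named volumes live on a single swarm node, so the runner service should either be pinned to that node (for example with a placement constraint) or use shared storage.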

Microsoft Azure cloud routing table is deleted

Because we connect to the Microsoft Azure cloud from within our WAN, special routing tables are configured in Azure so that network traffic can find its way to the cloud and back to our site. After we started the first virtual machine, we realized that we could not connect to it; there was no way to establish an SSH connection. After some research, we found out that the routing table had been deleted. After further tests and a deep dive into the docker+machine source code, we were able to pin the problem down to a single Microsoft Azure API function. To fix it, we wrote a small patch.
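Why a missing routing table breaks SSH follows from how routes are chosen: Azure selects the effective route for a packet by longest prefix match. The simplified Python model below uses hypothetical address ranges (10.1.0.0/16 for the VNet, 192.168.0.0/16 for the on-premise network) and assumes the subnet learns the on-premise range only through the custom route table. Without that route, replies to an on-premise SSH client fall through to the default route instead of going back over the site-to-site link. It illustrates the principle and is not Azure's route evaluation itself.

```python
import ipaddress
from typing import List, Optional, Tuple

Route = Tuple[str, str]  # (address prefix, next hop type)


def next_hop(destination: str, routes: List[Route]) -> Optional[str]:
    """Return the next hop type of the longest-prefix route matching destination."""
    address = ipaddress.ip_address(destination)
    best_len = -1
    best_hop: Optional[str] = None
    for prefix, hop in routes:
        network = ipaddress.ip_network(prefix)
        # Simplification: on equal prefix lengths the first route wins; Azure
        # would prefer user-defined over BGP over system routes.
        if address in network and network.prefixlen > best_len:
            best_len, best_hop = network.prefixlen, hop
    return best_hop


# Hypothetical address ranges: 10.1.0.0/16 is the Azure VNet,
# 192.168.0.0/16 stands for the on-premise network.
SYSTEM_ROUTES: List[Route] = [("10.1.0.0/16", "VnetLocal"), ("0.0.0.0/0", "Internet")]
CUSTOM_ROUTES: List[Route] = [("192.168.0.0/16", "VirtualNetworkGateway")]

if __name__ == "__main__":
    client = "192.168.10.5"  # hypothetical on-premise SSH client
    print("with route table:   ", next_hop(client, SYSTEM_ROUTES + CUSTOM_ROUTES))
    print("without route table:", next_hop(client, SYSTEM_ROUTES))
```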

The pull request is available on Github, where you can also find all the details. If you like, please upvote the patch.

Microsoft Azure cloud storage accounts are not deleted

After we navigated around the first problem, we immediately hit the next one. The Gitlab autoscale runner does what it is meant to do: it creates virtual machines and deletes them. However, the storage accounts are left behind. As a result, your Microsoft Azure resource group gets cluttered with orphaned storage accounts.
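If you have to clean up such leftovers yourself, finding them is a set difference. The Python sketch below assumes you can list the storage accounts in the runner resource group and know which storage account each remaining VM's disk lives in; both inputs and all names are hypothetical. Double-check the result before deleting anything, because other workloads in the same resource group may own storage accounts as well.

```python
from typing import Dict, Iterable, Set


def orphaned_storage_accounts(storage_accounts: Iterable[str],
                              vm_disk_accounts: Dict[str, str]) -> Set[str]:
    """Return storage accounts that no existing VM references.

    vm_disk_accounts maps VM name -> storage account holding its disk
    (hypothetical input; collect it from your own inventory or the Azure API).
    """
    referenced = set(vm_disk_accounts.values())
    return set(storage_accounts) - referenced
```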

Yes, you guessed it: I wrote the next patch and submitted a pull request on Github, where you can read all the details. If you like, please upvote the patch there :). Thank you.

Conclusion

After a lot of hacking, we are proud to have a fully working Gitlab autoscale runner configuration with Microsoft Azure on-premise integration up and running. We have also contacted our Microsoft enterprise cloud consultant about the issues we found, to let Microsoft know that there might be a bug in the Microsoft Azure API.

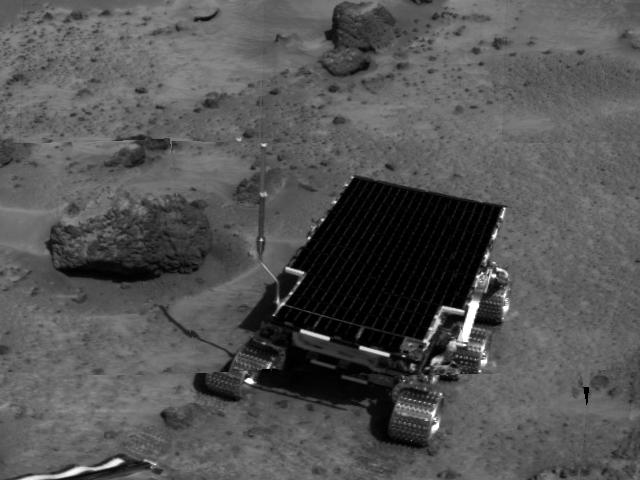

Blog picture

The blog picture of this post shows the [Sojourner rover]() from the [NASA Mars Pathfinder]() mission. In my opinion, it is an appropriate picture, because you never know what you will face in uncharted territory.