Docker Totd 3 Consensus and Syslog

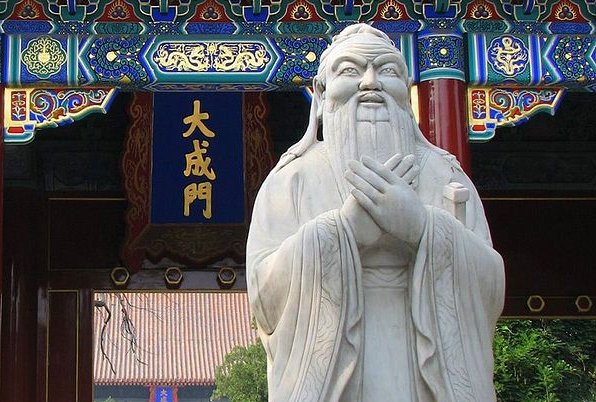

If you make a mistake and do not correct it, this is called a mistake. [Confucius]

Today we faced a problem with our Docker Swarm which was caused by a permanently restarting service. The service in question was Prometheus, which we use for monitoring our Docker environment.

The story starts in the middle of last week, when the new Prometheus (version 2) was released. In the docker-swarm.yml which we use for the Prometheus service, we stupidly still used prometheus:latest. Did you notice the latest? We have been warned (at DockerCon) not to use this. Yes, there are a lot of examples on the internet which do exactly this, but it is a very bad idea. latest literally means unknown, because you will not know which image is referenced by the latest tag. latest is only a convention, not a guarantee! Therefore, pin the version of the image which you really want to use, e.g. prometheus:1.1.1.
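To make the point about pinning concrete, here is a minimal sketch of how an image reference could be checked before it goes into a stack file. The function name is_pinned and the example registry host are made up for illustration, and the parsing is deliberately simplified. It is not a full implementation of Docker's reference grammar.

```python
def is_pinned(image: str) -> bool:
    """Return True if the reference carries a digest or an explicit tag other than 'latest'."""
    # A digest reference (name@sha256:...) always points at one exact image.
    if "@" in image:
        return True
    # Only inspect the last path component, so that a registry port
    # (registry.example.com:5000/prometheus) is not mistaken for a tag.
    last = image.rsplit("/", 1)[-1]
    if ":" not in last:
        return False  # no tag at all: Docker falls back to 'latest'
    tag = last.rsplit(":", 1)[1]
    return tag != "" and tag != "latest"


# Hypothetical image list, e.g. collected from a stack file by hand.
for ref in ["prometheus:latest", "prometheus", "prometheus:1.1.1"]:
    print(ref, "pinned" if is_pinned(ref) else "NOT pinned")
```

A check like this could run in a CI step so that an unpinned image never reaches the swarm in the first place.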

In our case, an unplanned service update caused the Prometheus image to be freshly pulled (you know, latest), and the new version corrupted the Prometheus database. Furthermore, the Prometheus configuration changed between the versions, which in turn caused a permanent restart of the service. That happened on the weekend, which wouldn’t be bad in itself, but it put the container engine under stress.

This is documented in this GitHub issue. The result of this bug is that the syslog gets spammed with a lot of pointless messages. This will fill up your log partition after some time (maybe hours, but it will fill up).

At this point it gets icky. In a default Ubuntu setup, for example, the /var partition contains the log directory and of course the lib/docker directory too. If the /var partition of the system is full, Docker can no longer write its Raft data either, and your Docker Swarm will be nearly dead.
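Whether the logs and the Docker data really share a partition on a given host can be checked quickly. The following is a rough sketch in Python using only the standard library. The helper names are invented for this post, and comparing device IDs is an approximation that can mislead with bind mounts or btrfs subvolumes.

```python
import os
import shutil


def share_filesystem(path_a: str, path_b: str) -> bool:
    """True if both paths report the same device ID, i.e. most likely the same partition."""
    return os.stat(path_a).st_dev == os.stat(path_b).st_dev


def percent_used(path: str) -> float:
    """Used space of the filesystem containing path, as a percentage of its total size."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


if __name__ == "__main__":
    log_dir, docker_dir = "/var/log", "/var/lib/docker"
    # Reading /var/lib/docker metadata may require root on some hosts.
    if os.path.exists(log_dir) and os.path.exists(docker_dir):
        if share_filesystem(log_dir, docker_dir):
            print("logs and Docker data share a filesystem")
        print(f"{log_dir} filesystem is {percent_used(log_dir):.1f}% full")
```

If the two directories share a filesystem, a runaway log can starve Docker of disk space exactly as described above.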

In our case we had a configuration mistake: we used four Docker Swarm manager nodes, not three and not five. Now we come to the ugly level. Bad luck, the filled up /var partition killed two of our Docker Swarm managers. The containers continued to work, but the cluster orchestration was messed up, because two out of four nodes were dead, and a swarm with four managers needs three of them for a majority. No quorum anymore, no consensus, no manageability.
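The arithmetic behind this is simple majority voting, as used by Raft: more than half of the managers must be reachable. A small sketch in Python (the function names are our own, not part of any Docker tooling) shows why four managers buy nothing over three:

```python
def quorum(managers: int) -> int:
    """Smallest number of managers that forms a strict majority."""
    return managers // 2 + 1


def tolerated_failures(managers: int) -> int:
    """How many managers may fail before the majority is lost."""
    return managers - quorum(managers)


def has_quorum(managers: int, alive: int) -> bool:
    """True if the managers still alive can form a majority."""
    return alive >= quorum(managers)


for n in (3, 4, 5):
    print(n, "managers: quorum", quorum(n), "tolerates", tolerated_failures(n), "failure(s)")

# Our situation: four managers, two of them dead.
print(has_quorum(4, 2))  # False
```

Three and four managers both survive exactly one failure, so the fourth manager only adds one more node that can break. Five managers survive two.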

But don't panic, there are ways to bring all services back online with some Linux voodoo (truncating syslog files, …). To sum it up, what are the lessons learned?

- Watch out for the correct number of Docker Swarm managers (there should be an odd number of them: 3, 5, 7, ...)

- Never ever use the latest tag if you are not the maintainer of the image!

- Restrict syslog so that it cannot fill up your partition. Place the syslog logs on a separate partition, or disable syslog forwarding in the systemd journald config: ForwardToSyslog=false. journald's default configuration is to use a maximum of 10% of the disk space for the log data.

- Use LinuxKit maybe. It is made out of containers, which can be restricted to not use all system resources. If you ever asked yourself why you should have a look at it, read number two. The Docker host is not a general purpose server, likely you do not need default syslog and much more. This is what LinuxKit is designed for.

That's all for today.

-M

(Image by Walter Grassroot Wikipedia)